
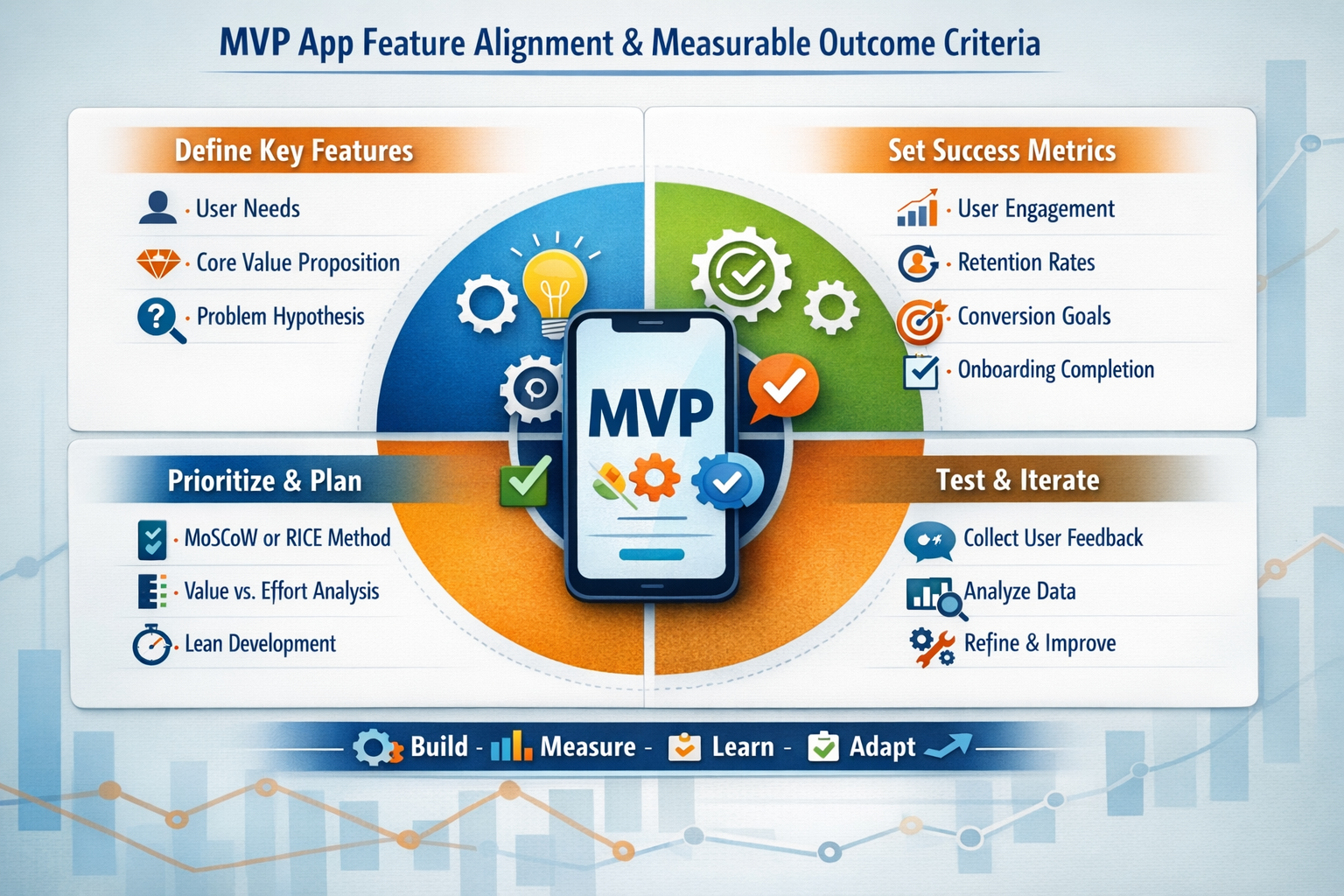

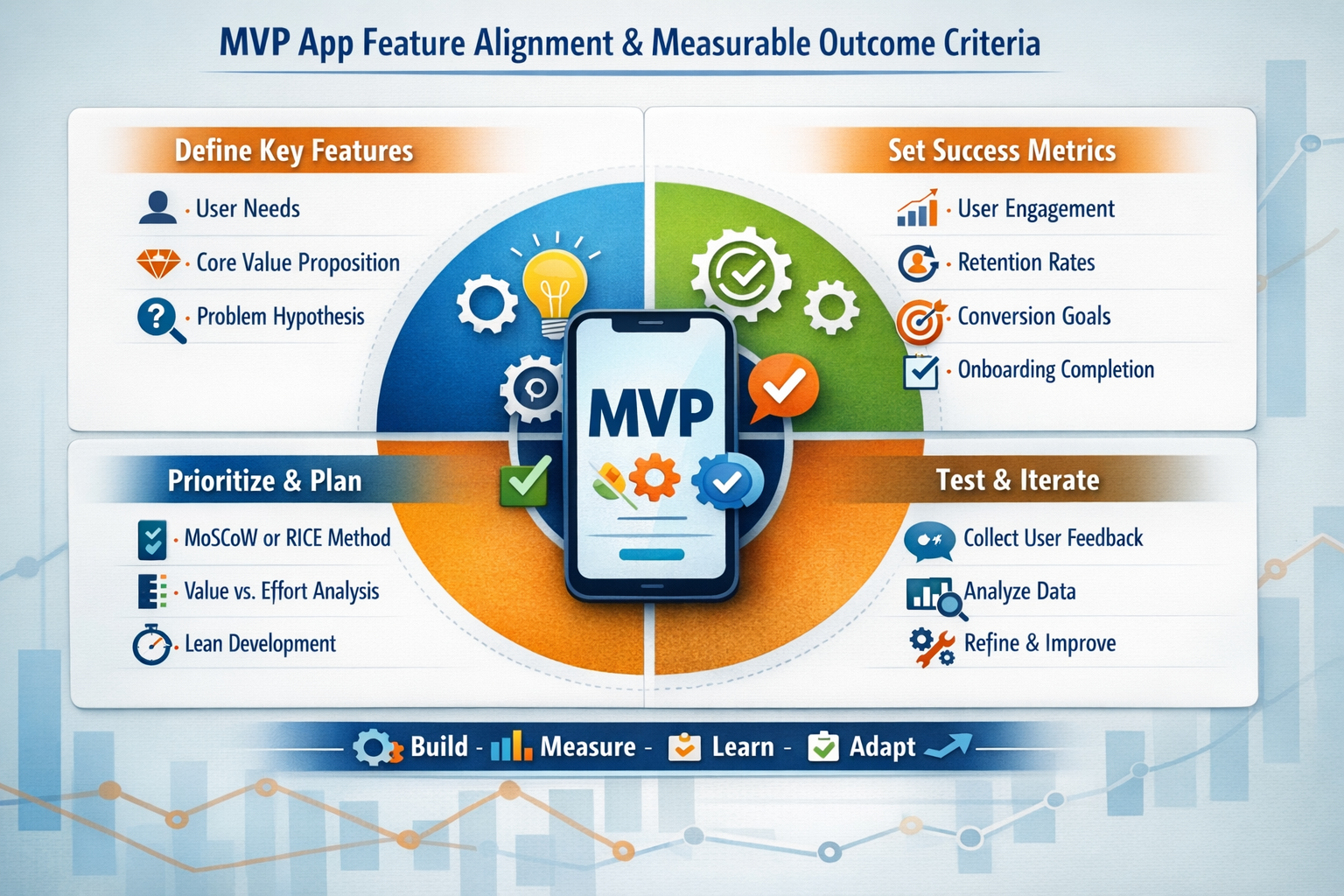

Launching a digital product without validated direction often results in wasted capital and delayed market entry. A Minimum Viable Product (MVP) provides a structured way to test assumptions while limiting exposure to risk. However, success depends on precise feature alignment and clearly defined outcome criteria. Organizations investing in MVP app development services must balance speed, scope, and measurable impact. This article explores how to structure feature prioritization, establish evaluation metrics, and create a disciplined execution framework that drives meaningful product validation.

An MVP is not a simplified version of the final product; it is a validation mechanism. Its primary objective is to test a core value proposition under real market conditions. Every feature included must serve that objective.

Strategic purpose begins with identifying:

The primary user persona

The specific problem being solved

The unique value proposition

The measurable behavioral outcome expected

Feature scope should directly support problem-solution validation. If a feature does not contribute to testing a critical assumption, it should be excluded from the initial build.

A disciplined scope definition process includes:

Documenting product hypotheses.

Mapping each hypothesis to at least one measurable metric.

Assigning features that validate those metrics.

Eliminating secondary enhancements.
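
The four steps above can be sketched as a small data structure. This is a minimal illustration, not a prescribed tool: the `Hypothesis` class, the `scope_report` function, and the feature and metric names are all hypothetical, and the code assumes Python 3.9 or later.

```python
from dataclasses import dataclass, field


@dataclass
class Hypothesis:
    """A product assumption the MVP is meant to test (illustrative structure)."""
    name: str
    metrics: list[str] = field(default_factory=list)   # how the hypothesis is measured
    features: list[str] = field(default_factory=list)  # what must be built to test it


def scope_report(hypotheses: list[Hypothesis], candidates: list[str]) -> dict[str, list[str]]:
    """Split candidate features into in-scope and deferred, and flag unmeasured hypotheses."""
    needed = {feature for h in hypotheses for feature in h.features}
    return {
        "in_scope": [f for f in candidates if f in needed],
        "deferred": [f for f in candidates if f not in needed],
        "unmeasured": [h.name for h in hypotheses if not h.metrics],
    }


# Hypothetical hypotheses and candidate features.
hypotheses = [
    Hypothesis(
        "Users value a streamlined booking process",
        metrics=["booking_completion_rate", "time_to_complete"],
        features=["booking_flow"],
    ),
    Hypothesis("Users return to rebook", features=["booking_history"]),
]
report = scope_report(hypotheses, ["booking_flow", "booking_history", "dark_mode"])
print(report)
```

Any feature that lands in `deferred` supports no documented hypothesis, and any hypothesis listed under `unmeasured` still needs a metric before development begins.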

Feature creep at the MVP stage distorts results. When unnecessary functionality is added, user behavior becomes harder to interpret. Precision in scope ensures clarity in learning.

Without predefined success criteria, MVP results are subjective. Validation requires quantifiable benchmarks established before development begins.

Common categories of MVP metrics include:

Engagement metrics: daily or weekly active users, session duration, and feature interaction rates.

Acquisition metrics: cost per acquisition, conversion from visitor to registered user, and onboarding completion rates.

Retention metrics: 7-day or 30-day retention, churn rate, and repeat usage frequency.

Each metric must align with a hypothesis. For example, if the hypothesis states that users value a streamlined booking process, completion rate and time-to-complete become primary metrics.

The role of MVP app development services in this phase is to embed analytics architecture during development rather than retrofitting it later. Instrumentation planning should be part of the technical design documentation, so that every interaction tied to a hypothesis generates usable data.

Clear thresholds must also be defined. A measurable outcome should specify numerical targets, such as achieving a 25 percent onboarding completion rate within the first month. Ambiguous targets undermine decision-making.
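
As a rough illustration of a numerical target, the check below compares an observed onboarding completion rate with the 25 percent threshold mentioned above. The function names and the user counts are made up for the example.

```python
def completion_rate(started: int, completed: int) -> float:
    """Share of users who finished onboarding, as a fraction between 0 and 1."""
    if started == 0:
        return 0.0
    return completed / started


def meets_target(rate: float, target: float) -> bool:
    """True when the observed rate reaches the predefined threshold."""
    return rate >= target


ONBOARDING_TARGET = 0.25  # the 25 percent target from the text

# Hypothetical first-month numbers.
rate = completion_rate(started=1200, completed=330)
print(f"completion rate: {rate:.1%}, target met: {meets_target(rate, ONBOARDING_TARGET)}")
```

Fixing the threshold as a constant before launch keeps the pass or fail decision from being renegotiated after the data arrives.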

Feature prioritization demands structured evaluation rather than intuitive selection. Several frameworks support objective decision-making.

MoSCoW method: must-have, should-have, could-have, and won't-have features.

RICE scoring: reach, impact, confidence, and effort.

Value versus complexity matrix: high value and low complexity, high value and high complexity, low value and low complexity, low value and high complexity.

Each framework reduces emotional bias and emphasizes measurable impact. For MVP alignment, must-have or high-value low-complexity features should dominate the scope.
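
To make one of these frameworks concrete, the sketch below ranks features by RICE score, using the commonly cited formula of reach times impact times confidence, divided by effort. The feature names, the numbers, and the units (users per quarter, person-months) are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    reach: float       # users affected per period (assumed: per quarter)
    impact: float      # relative impact score chosen by the team
    confidence: float  # 0.0 to 1.0
    effort: float      # assumed unit: person-months, must be greater than zero

    @property
    def rice(self) -> float:
        """RICE score: (reach * impact * confidence) / effort."""
        return self.reach * self.impact * self.confidence / self.effort


# Hypothetical candidates.
features = [
    Feature("dark_mode", reach=1000, impact=0.5, confidence=0.9, effort=3),
    Feature("booking_flow", reach=1000, impact=3, confidence=0.8, effort=4),
    Feature("referral_program", reach=400, impact=2, confidence=0.5, effort=2),
]

ranked = sorted(features, key=lambda f: f.rice, reverse=True)
for f in ranked:
    print(f"{f.name}: {f.rice:.0f}")
```

The score is only as reliable as its inputs, so the confidence value should be lowered whenever reach or impact is a guess rather than a measurement.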

Additionally, cross-functional collaboration between product managers, designers, engineers, and data analysts ensures feasibility and measurable output. Engineering constraints and UX considerations should influence prioritization decisions, preventing misalignment between strategic ambition and technical reality.

A documented prioritization matrix also serves as a reference point during stakeholder discussions, reducing the likelihood of scope expansion mid-development.

User journey mapping translates strategic objectives into practical interaction flows. It ensures that the MVP validates real behavioral pathways rather than isolated features.

A structured journey map includes:

Entry point into the application.

Initial interaction or onboarding step.

Core value interaction.

Confirmation or feedback stage.

Retention trigger.

Each stage must have defined success indicators. For example, if the primary value lies in enabling peer-to-peer transactions, the journey should focus on discovery, transaction initiation, and successful completion.
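
One possible success indicator per stage is the step-to-step conversion rate. The sketch below computes it for an ordered journey; the stage names follow the list above, and the user counts are invented for the example.

```python
def funnel_conversion(stage_counts: list[tuple[str, int]]) -> list[tuple[str, float]]:
    """Return (stage, conversion from the previous stage) for an ordered journey."""
    result = []
    for (_, previous), (stage, current) in zip(stage_counts, stage_counts[1:]):
        rate = current / previous if previous else 0.0
        result.append((stage, rate))
    return result


# Hypothetical counts of users reaching each stage.
journey = [
    ("entry", 1000),
    ("onboarding", 600),
    ("core_value", 300),
    ("confirmation", 240),
    ("retention_trigger", 60),
]

steps = funnel_conversion(journey)
weakest = min(steps, key=lambda step: step[1])
for stage, rate in steps:
    print(f"{stage}: {rate:.0%}")
print("largest drop-off entering:", weakest[0])
```

The stage with the lowest conversion is a natural first candidate when looking for friction in the journey.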

During MVP planning, eliminate alternate pathways that distract from the primary validation goal. Secondary flows can be incorporated in later iterations once the core hypothesis is validated.

This mapping process also identifies friction points early. For instance:

Excessive data entry requirements

Confusing navigation

Redundant confirmation screens

By refining the journey, teams reduce noise in user behavior data and obtain clearer insights.

One of the primary constraints of MVP development is resource allocation. Speed to market must be balanced against technical robustness and budget discipline.

When evaluating MVP app development cost, organizations should analyze:

Infrastructure requirements

Backend scalability expectations

Third-party integrations

Security compliance standards

Data storage and analytics setup

Underinvestment can lead to unstable systems that distort validation results. Overinvestment, on the other hand, reduces the financial advantage of iterative testing.

Teams must differentiate between temporary technical compromises and structural risks. For example, simplified UI design is acceptable for validation, but insecure data handling is not.

Technology choices also influence development timelines. Some organizations adopt no-code app development platforms to accelerate prototyping and reduce engineering overhead. While these tools offer rapid iteration, they may limit customization or scalability. Decision-makers must evaluate whether those constraints are compatible with the long-term product vision.

Strategic cost allocation should prioritize core functionality, analytics integration, and performance stability.

An MVP is valuable only if feedback informs iteration. Data collection mechanisms should be both quantitative and qualitative.

Quantitative inputs include:

Event tracking analytics

Funnel analysis

Cohort performance tracking

Qualitative inputs include:

User interviews

In-app surveys

Behavioral observation sessions

Feedback loops should operate on defined cycles, such as biweekly or monthly review sessions. During these sessions, teams compare actual metrics against predefined targets and determine whether hypotheses are validated, partially validated, or invalidated.
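
A review session's comparison step might look like the sketch below. The rule for "partially validated" is an assumption made for this example (reaching at least 80 percent of the target); the article does not define one, and teams should set their own cutoff in advance. The code also assumes that higher metric values are better.

```python
from enum import Enum


class Status(Enum):
    VALIDATED = "validated"
    PARTIAL = "partially validated"
    INVALIDATED = "invalidated"


def classify(actual: float, target: float, partial_ratio: float = 0.8) -> Status:
    """Compare a metric with its predefined target (higher is assumed to be better).

    partial_ratio is a hypothetical cutoff: results at or above this share
    of the target count as partially validated.
    """
    if actual >= target:
        return Status.VALIDATED
    if actual >= target * partial_ratio:
        return Status.PARTIAL
    return Status.INVALIDATED


# Hypothetical review: (actual, target) per metric.
review = {
    "onboarding_completion_rate": (0.275, 0.25),
    "day_7_retention": (0.17, 0.20),
    "booking_completion_rate": (0.30, 0.60),
}
results = {metric: classify(actual, target) for metric, (actual, target) in review.items()}
for metric, status in results.items():
    print(f"{metric}: {status.value}")
```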

Iteration decisions typically fall into three categories:

Persevere with minor adjustments.

Pivot strategic direction.

Terminate the concept.

This structured evaluation prevents emotional attachment from influencing decisions. Instead, measurable outcomes drive roadmap adjustments.

Transparent documentation of findings ensures organizational learning. Even invalidated hypotheses provide insight that can inform future product strategies.

Feature creep is one of the most significant threats to MVP alignment. It often arises from stakeholder enthusiasm, competitive pressure, or internal assumptions about user expectations.

To mitigate scope expansion:

Maintain a documented feature freeze after planning approval.

Require formal review for any additional feature request.

Tie new feature proposals to measurable hypotheses.

Quantify incremental development time and cost impact.
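
The review rules above could be enforced with a simple checklist in code. This is a hypothetical sketch: the `FeatureRequest` fields and the `review_blockers` function are illustrative names, not part of any particular tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FeatureRequest:
    """A post-freeze feature proposal (illustrative structure)."""
    name: str
    hypothesis: Optional[str] = None    # measurable hypothesis the feature would test
    added_days: Optional[float] = None  # estimated extra development time
    added_cost: Optional[float] = None  # estimated extra cost


def review_blockers(request: FeatureRequest) -> list[str]:
    """List the reasons a request is not yet ready for formal review."""
    blockers = []
    if not request.hypothesis:
        blockers.append("no measurable hypothesis attached")
    if request.added_days is None:
        blockers.append("development time impact not quantified")
    if request.added_cost is None:
        blockers.append("cost impact not quantified")
    return blockers


incomplete = FeatureRequest("social_sharing", added_days=5)
complete = FeatureRequest(
    "saved_payment_method",
    hypothesis="Stored payment details raise booking completion rate",
    added_days=8,
    added_cost=6000,
)
print(review_blockers(incomplete))
print(review_blockers(complete))
```

An empty list means the request can go to the review board; it does not mean the request is approved.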

Scope creep not only delays launch but also contaminates validation metrics. When multiple new features are introduced simultaneously, isolating performance drivers becomes difficult.

Governance structures can help maintain discipline. A designated product owner should have final authority on scope changes. Cross-functional review boards can assess whether proposed changes align with validation objectives.

Additionally, maintaining a backlog of deferred features reassures stakeholders that ideas are not dismissed permanently but scheduled for future evaluation.

Although an MVP is minimal, it should not be architecturally fragile. Foundational technical decisions influence scalability, maintainability, and data integrity.

Key architectural considerations include:

Modular code structure

API-first design

Cloud-native infrastructure

Data security compliance

Automated testing frameworks

Selecting mobile app development solutions requires alignment with expected growth patterns. Even if user volume is initially small, the architecture should support scaling without full system reconstruction.

Analytics architecture is equally critical. Event schemas must be standardized, and data pipelines should enable real-time or near-real-time analysis.
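
A standardized event schema can be as simple as one validated record type shared by every client. The sketch below is one way to do it with the Python standard library; the event names and fields are assumptions for illustration, not a required schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical allow-list of event names agreed on during technical design.
ALLOWED_EVENTS = {"onboarding_started", "onboarding_completed", "booking_completed"}


@dataclass(frozen=True)
class Event:
    """A single analytics event with a fixed, validated shape."""
    name: str
    user_id: str
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    properties: dict = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Reject anything that would make downstream analysis inconsistent.
        if self.name not in ALLOWED_EVENTS:
            raise ValueError(f"unknown event name: {self.name!r}")
        if not self.user_id:
            raise ValueError("user_id is required")
        if self.timestamp.tzinfo is None:
            raise ValueError("timestamp must be timezone-aware")


event = Event("booking_completed", "user-42", properties={"seconds_to_complete": 95})
print(event.name, event.timestamp.isoformat())
```

Rejecting unknown event names at the point of creation keeps misspelled or ad hoc events out of the pipeline, where they would otherwise fragment funnel and cohort reports.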

Performance monitoring tools should track:

Server response time

Error rates

Crash frequency

Load handling capacity
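
Two of these signals, response time and error rate, can be summarized from a request log as sketched below. The log format and sample values are hypothetical, the percentile uses the nearest-rank method, and counting only 5xx statuses as errors is an assumption of this example.

```python
import math


def percentile(values: list[float], pct: int) -> float:
    """Nearest-rank percentile: the value at rank ceil(pct% of n) in sorted order."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def error_rate(status_codes: list[int]) -> float:
    """Share of requests that returned a server error (5xx)."""
    if not status_codes:
        return 0.0
    return sum(1 for code in status_codes if code >= 500) / len(status_codes)


# Hypothetical sample: (response time in milliseconds, HTTP status).
request_log = [
    (120, 200), (95, 200), (310, 200), (2200, 500), (140, 200),
    (180, 200), (90, 404), (160, 200), (130, 200), (110, 200),
]

p95_ms = percentile([ms for ms, _ in request_log], 95)
errors = error_rate([status for _, status in request_log])
print(f"p95 response time: {p95_ms} ms, error rate: {errors:.0%}")
```

Tracking a high percentile rather than the average makes slow outliers visible, which matters when a few bad sessions could be mistaken for lack of user interest.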

A technically stable MVP ensures that user behavior reflects product-market fit rather than system limitations.

Once validation metrics are collected, structured analysis determines next steps. Data interpretation must be systematic rather than anecdotal.

A post-launch decision framework may include:

Reviewing hypothesis validation status.

Segmenting users by behavior patterns.

Identifying friction points in conversion funnels.

Prioritizing enhancements based on impact magnitude.

Planning controlled feature rollouts.

Cohort analysis is particularly valuable. Observing retention differences across user segments provides insight into which audience profiles demonstrate stronger alignment with the value proposition.
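
A minimal version of this comparison is sketched below. It assumes one particular definition of 7-day retention (a user counts as retained if active exactly seven days after signup); other definitions exist, and the segment names and dates are invented for the example.

```python
from collections import defaultdict
from datetime import date


def retention_by_segment(
    users: dict[str, tuple[str, date]],
    activity: list[tuple[str, date]],
    n: int = 7,
) -> dict[str, float]:
    """Share of each segment's users who were active exactly n days after signup.

    users maps user id to (segment, signup date); activity is a list of
    (user id, active date) pairs and may contain duplicates.
    """
    retained = set()
    for user, day in activity:
        if user in users and (day - users[user][1]).days == n:
            retained.add(user)

    totals: dict[str, int] = defaultdict(int)
    kept: dict[str, int] = defaultdict(int)
    for user, (segment, _) in users.items():
        totals[segment] += 1
        if user in retained:
            kept[segment] += 1
    return {segment: kept[segment] / totals[segment] for segment in totals}


# Hypothetical users and activity.
users = {
    "a": ("freelancer", date(2024, 1, 1)),
    "b": ("freelancer", date(2024, 1, 1)),
    "c": ("agency", date(2024, 1, 2)),
    "d": ("agency", date(2024, 1, 2)),
}
activity = [
    ("a", date(2024, 1, 8)),
    ("b", date(2024, 1, 5)),
    ("c", date(2024, 1, 9)),
    ("d", date(2024, 1, 9)),
    ("a", date(2024, 1, 8)),  # duplicate events are counted once
]

retention = retention_by_segment(users, activity)
print(retention)
```

With real data, segments this small would not support conclusions; the example only shows the mechanics.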

A/B testing can further refine product direction. Controlled experiments isolate variables and measure performance differences between feature variants.
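
One common way to judge whether two variants really differ is a two-proportion z-test on their conversion rates. The sketch below uses only the standard library; the conversion counts are hypothetical, and the test assumes independent users and reasonably large samples.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z statistic, two-sided p-value) for the difference in conversion rates.

    Uses the pooled standard error. Raises ZeroDivisionError if the pooled
    rate is exactly 0 or 1, since the standard error is then zero.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical experiment: variant A converts 100 of 1000 users, variant B 130 of 1000.
z, p_value = two_proportion_z_test(conv_a=100, n_a=1000, conv_b=130, n_b=1000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

The significance level, like the metric thresholds, should be chosen before the experiment starts rather than after the results are in.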

Long-term growth strategy should be based on validated insights rather than assumptions. Incremental expansion of functionality should follow demonstrated demand, ensuring sustainable scaling.

A disciplined approach to feature alignment and measurable outcome criteria transforms a minimum viable product from a simple prototype into a strategic validation instrument. Clear hypotheses, structured prioritization, defined metrics, and controlled iteration cycles create a reliable decision-making framework. By focusing on data integrity, architectural stability, and governance discipline, organizations can reduce uncertainty and maximize learning efficiency. When validation is treated as a systematic process rather than an experimental guess, product development becomes significantly more predictable and strategically informed.